GSA SER Verified Lists Vs Scraping

The Core Difference Between GSA SER Verified Lists and Scraping

Every seasoned GSA SER user eventually faces the same strategic decision: should you build campaigns on pre-tested, curated target lists or let the software generate its own opportunities from scratch? The debate around GSA SER verified lists vs scraping is not about right and wrong but about speed, control, and long-term efficiency. Understanding when to use each approach can dramatically alter your success rates and server resource consumption.

What Exactly Are GSA SER Verified Lists?

A verified list is a ready-made collection of URLs that have already passed through GSA SER’s identification and submission process. These targets are known to accept registrations, logins, or posts. They are typically exported after a successful run, cleaned of duplicates and dead links, and shared or sold in marketplaces. The value proposition is instant action: you load the list, and your software begins posting immediately without spending time searching for platforms.

These lists are often categorized by platform type—article directories, social bookmarks, web 2.0 properties, wikis, and comment forms. High-quality verified lists can produce links within minutes, making them incredibly tempting for new projects. However, they come with inherent limitations. A static list decays over time as sites go offline, change their platforms, or tighten moderation. What worked yesterday might not work today, and unless you constantly re-verify, your success rate will drop steadily.
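Before re-verifying a list, it usually helps to collapse duplicates so each site is tested only once. The snippet below is a minimal sketch of that cleanup step done outside GSA SER, assuming the list is a plain text export with one URL per line. The function name `dedupe_by_host` is illustrative and not part of any GSA SER feature.

```python
from urllib.parse import urlsplit


def dedupe_by_host(urls):
    """Keep the first URL seen for each host, ignoring case and a leading 'www.'.

    Entries without a scheme (for example 'example.com/page') have no
    parseable host and are skipped in this sketch.
    """
    seen = set()
    kept = []
    for raw in urls:
        url = raw.strip()
        if not url:
            continue  # skip blank lines
        host = (urlsplit(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        if not host or host in seen:
            continue  # unparseable entry or a host we already kept
        seen.add(host)
        kept.append(url)
    return kept


if __name__ == "__main__":
    sample = [
        "http://www.example.com/register",
        "https://example.com/login",
        "http://blog.example.org/post",
    ]
    for url in dedupe_by_host(sample):
        print(url)
```

Deduplicating by host rather than by full URL is a design choice: it keeps one entry per site, which suits re-verification but would discard legitimately distinct pages on the same domain.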

The Scraping Alternative: Fresh Discovery on the Fly

Scraping, in the context of GSA SER, is the process of harvesting new target URLs from search engines using queries tied to specific footprints. Instead of relying on a pre-existing list, the software sends queries to Google, Bing, or custom search engines, parses the results, identifies potential platforms, and attempts to register or post. This method is fully dynamic. Every campaign run can theoretically find brand-new domains that no competitor has touched yet.
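The idea of pairing footprints with niche keywords can be sketched in a few lines. This is an illustration of the concept only, not GSA SER's internal query builder. The footprints and keywords shown are hypothetical examples, and `build_queries` is a name invented for this sketch.

```python
from itertools import product


def build_queries(footprints, keywords):
    """Combine every footprint with every keyword into one search query string."""
    return [f'{fp} "{kw}"' for fp, kw in product(footprints, keywords)]


if __name__ == "__main__":
    # Hypothetical footprints: text or URL patterns typical of a platform.
    footprints = ['"Powered by WordPress" "Leave a Reply"', "inurl:wiki"]
    # Hypothetical niche keywords.
    keywords = ["gardening", "home brewing"]
    for query in build_queries(footprints, keywords):
        print(query)
```

The number of queries grows as footprints times keywords, which is why a modest keyword file can still generate heavy search engine traffic.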

The strength of scraping is scale and perpetual freshness. You are not bound by the size of a purchased list. With the right proxies, search engine rotation, and footprint configurations, your scraping engine can pull in thousands of unique targets per hour. More importantly, you can laser-target specific niches, languages, and platform types by fine-tuning your search queries. The downside is resource intensity. Scraping demands considerable CPU, memory, and especially a large pool of high-quality proxies to avoid IP bans from search engines. It also introduces latency: before you can post a single link, the software must search, parse, filter, and test each target.

Speed and Immediate Output: Verified Lists Win the Sprint

When you need links quickly—for a time-sensitive tiered campaign, a parasite SEO page, or a fresh money site that needs an initial boost—nothing beats importing a well-maintained verified list. The software skips the entire discovery phase. Threads move directly to submission, which means you can achieve hundreds or even thousands of verified links in the first hours after starting a project. For aggressive link building where volume matters, this immediate gratification is powerful.

However, speed can be deceptive. If a verified list has been heavily circulated, your links will land on domains that have already been hammered by hundreds of other users. The “juice” from such links is diluted. Search engines may have deindexed or penalized many of those pages already. A sprint start can turn into a crawl if the link quality is poor and you are simply building a footprint of spam that gets ignored.

Quality, Uniqueness, and Footprint Control: Scraping Takes the Lead

The core advantage of scraping in the GSA SER verified lists vs scraping comparison is link diversity and footprint reduction. Since the targets are freshly discovered, you are naturally building a backlink profile that is more random and therefore more natural in appearance. You have granular control over the platforms you target—you can exclude certain CMS types, filter by domain authority metrics, and focus on regions with specific languages. This customization is impossible with a static list built by someone else with unknown filtering criteria.

Scraping also lets you tap into the massive, unexhausted tail of the internet. While public verified lists tend to concentrate on popular and easily identifiable platforms, a carefully crafted scrape can find obscure blogs, forums, and self-hosted community sites that have never seen automated link building. These gems often survive algorithm updates because they are on real, moderated websites that happen to allow profiles or comments. The effort is higher, but the payoff in link sustainability is far greater.

The Resource Equation: Cost, Proxies, and Server Load

Using verified lists is resource-light. You can run campaigns on modest hardware without an extensive proxy setup because you are not constantly querying search engines. A handful of proxies for posting is usually sufficient. This makes verified lists attractive for users with limited budgets or those running smaller, targeted projects.

Scraping is a power-hungry beast. To do it at scale, you need dedicated proxies that search engines have not already blocked, robust captcha solving services, and a server with enough CPU and memory to run the many threads that a large volume of search requests requires. The proxy cost alone can be prohibitive if you are not careful. Additionally, scraping adds constant load to your overall setup, and poorly tuned queries can quickly burn through proxy IPs without returning many usable targets. The investment only makes sense if you have a strategy to convert the flood of discovered targets into solid links efficiently.
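A rough way to size the proxy pool is to divide the query volume you want by the rate a single proxy can sustain before a search engine starts blocking it. The sketch below shows only that arithmetic. Both input figures are placeholders to replace with rates measured on your own setup, and `proxies_needed` is a hypothetical helper name.

```python
def proxies_needed(queries_per_hour, safe_queries_per_proxy_per_hour):
    """Return the smallest proxy count that covers the target query volume."""
    if queries_per_hour < 0 or safe_queries_per_proxy_per_hour <= 0:
        raise ValueError("query volume must be >= 0 and per-proxy rate must be > 0")
    # Integer ceiling division: round up so the pool is never undersized.
    per_proxy = safe_queries_per_proxy_per_hour
    return (queries_per_hour + per_proxy - 1) // per_proxy


if __name__ == "__main__":
    # Placeholder numbers for illustration only, not measured values.
    print(proxies_needed(3000, 40))
```

With these placeholder inputs the estimate is 75 proxies. The real safe rate varies by search engine and proxy quality, so treat the result as a starting point for budgeting rather than a guarantee.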

Combining Both Methods for Maximum Efficiency

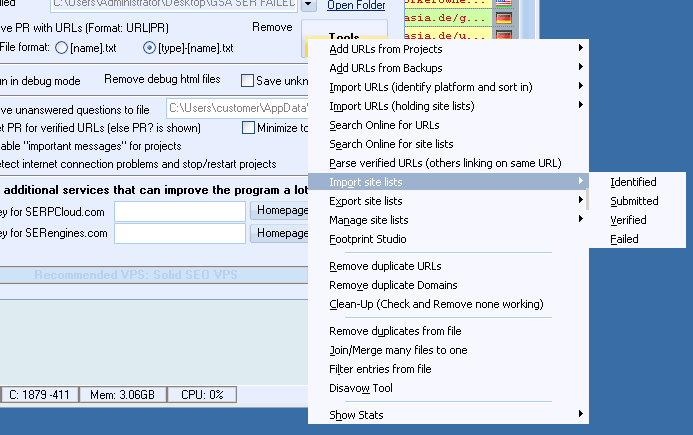

The most experienced GSA SER users rarely rely solely on one method. A hybrid approach often yields the best results. Start with a fresh scrape to build a large, clean list of platforms that match your exact criteria. Then, verify and deduplicate that list thoroughly. The resulting collection becomes your private, semi-verified asset. Use it for rapid initial submissions across multiple projects, while keeping a background scraping engine running to constantly replenish the list with new domains as old ones die.

This strategy captures the speed advantage of a list without suffering the staleness problem of a public one. You control the quality from day one, and your campaigns inherit your unique target profile rather than a generic set leaked across forums. The process can be automated inside GSA SER by using the global site lists in combination with scheduled scraping tasks, so you never have to choose permanently between operating modes.
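The replenishment loop described in this section boils down to two operations on the private list: drop targets that have stopped working, then append newly verified ones that are not already present. The following is a minimal sketch of that logic on plain Python lists. It is not how GSA SER stores or manages its global site lists, and `refresh_private_list` is a name made up for this example.

```python
def refresh_private_list(private, newly_verified, failed):
    """Return an updated list: failed targets removed, unseen new targets appended.

    Order is preserved so that older, proven targets stay at the front.
    """
    failed_set = set(failed)
    updated = [url for url in private if url not in failed_set]
    known = set(updated)
    for url in newly_verified:
        if url not in known and url not in failed_set:
            known.add(url)
            updated.append(url)
    return updated


if __name__ == "__main__":
    private = ["http://a.example/", "http://b.example/", "http://c.example/"]
    newly_verified = ["http://c.example/", "http://d.example/"]
    failed = ["http://b.example/"]
    print(refresh_private_list(private, newly_verified, failed))
```

Running this step after every scraping batch keeps the list from growing stale without ever rebuilding it from scratch.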

Making the Right Choice for Your Project

Selecting between GSA SER verified lists vs scraping ultimately depends on your immediate goals, technical comfort, and budget. If you are building low-tier buffer links, running a quick test, or lack the proxy infrastructure for massive scraping, a fresh verified list from a reputable source is a pragmatic shortcut. Just remember to re-verify it frequently and accept the lower ceiling on long-term value.

If you are constructing a foundational tier-1 network, managing a long-term PBN project, or working in a competitive niche where link profiles are scrutinized, scraping is non-negotiable. Invest in the proxies, refine your footprints, and let the machine discover opportunities that other users have not already processed repeatedly. The upfront cost in time and money should translate into a healthier, more resilient backlink graph that is more likely to survive manual reviews and algorithmic shifts. For most serious practitioners, the real answer is not one or the other, but a disciplined, automated fusion that keeps the pipeline both fast and fresh.